Artificial intelligence (AI) has revolutionized digital creativity at dizzying speed over the last three to five years.

What used to take decades now happens in months, but the technology has also unleashed a new frontier of deception and fraud. With the ability to generate strikingly realistic images and mimic voices in seconds, it’s no surprise that AI tools are now being exploited by scammers to fabricate events, impersonate loved ones, manipulate emotions and defraud the innocent, all with calculated precision.

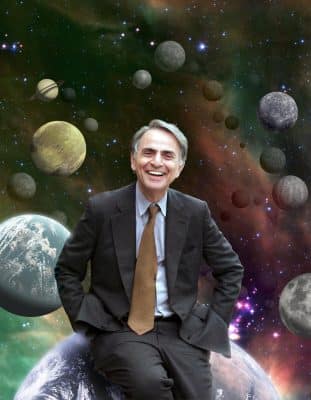

Unlike traditional photo editing with tools such as Adobe Photoshop, which required time and a certain level of technical skill, today’s AI platforms allow virtually anyone to produce convincing visuals using simple text or voice prompts. One chilling example, shared by computer software expert Leo Notenboom of the Internet’s “Ask Leo” fame, is an image of Notenboom’s car parked outside a downtown strip club, its neon sign glowing in the background. The photo, complete with his correct license plate, was entirely fabricated by Notenboom himself using AI. Though he created the image purely for illustration, it was realistic enough to damage a reputation or a relationship if used maliciously. Scammers often obtain personal data by using shady online services, scouring social media or searching the dark web, making such targeted attacks increasingly feasible.

But the threat doesn’t stop at still images. According to the NYC Department of Consumer and Worker Protection (DCWP), scammers are now using AI for voice cloning and deepfake videos. In voice-cloning scams, fraudsters impersonate family members using audio lifted from social media (or even from the “I’m away from the phone” message on your answering machine), often calling with urgent pleas for money. A recent McAfee study found that one in four people has experienced a voice-cloning attack or knows someone who has, with 77% of victims losing money as a result. Deepfake scams involve altered videos in which sometimes-obvious cues (such as strange eye movement, incongruent facial features and unusual lighting) may hint at manipulation, but the emotional impact can still be profound. These scams can cause social and emotional trauma, and scammers typically demand payment through hard-to-trace methods such as wire transfers, gift cards or cryptocurrency, making recovery nearly impossible. According to the deepfake detection platform Resemble AI, deepfake-enabled fraud caused over $200 million in financial losses during the first quarter of 2025 alone.

To safeguard against these evolving threats, DCWP offers several practical tips:

- Verify identity: If you receive a call from a family member urgently demanding money, verify their identity by asking questions only your real loved one would know.

- Don't trust caller ID. Calls that appear to come from government agencies (IRS, Social Security, Medicare) can easily be spoofed. Instead, call the institution using a verified number.

- Pause before reacting to urgent requests by text, phone or email. Scammers rely on panic to override judgment.

- Limit personal content shared on social media (videos, reels, names, etc.), which can be mined for voice samples and private details.

- Report scams to the Federal Trade Commission (reportfraud.ftc.gov) to help prevent future fraud.

As AI continues to evolve, so must our ability to critically evaluate the media we consume. If an image or video seems too perfect, too shocking, too bizarre or too emotionally manipulative, it's worth questioning its authenticity. In this new digital landscape, skepticism is not cynicism; it's self-defense.

Source:

CalRTA Contact, Volume 41, Issue 2, Fall 2025, a membership publication of the California Retired Teachers Association, of which your Editor is, happily, a member. Reprinted with permission of the author.

This article draws from Leo A. Notenboom's "Faking Reality: How AI Images are Being Used to Scam You" and consumer protection guidance from the NYC Department of Consumer and Worker Protection.

Author:

Sue Breyer has been CalRTA's Communications and Technology Chair since 2021 and has been the driving force behind numerous "how-to" videos for members.